There’s a pattern playing out in most organizations right now.

It’s not in the AI strategy deck.

It’s not in the adoption metrics.

It’s not being discussed in the leadership team meeting.

It’s in the moment before someone hits send.

Someone opens an AI tool.

Types a request.

Gets an output.

Reviews it briefly.

Uses it.

Moves on.

That moment — unremarkable, repeated dozens of times a day, across every person in your organization — is where the most important question in AI adoption goes unasked:

Whose thinking is actually driving this output?

Typical AI Use

When you look at how AI is being used in a typical knowledge-work environment, the tasks are predictable:

- drafting and rewriting

- summarizing and synthesizing

- generating options

- searching and structuring

- framing decisions

- preparing materials

These aren’t edge-case uses. They are the core work of most professional roles.

And AI is genuinely good at all of them — for reasons worth understanding precisely.

What AI Does Well

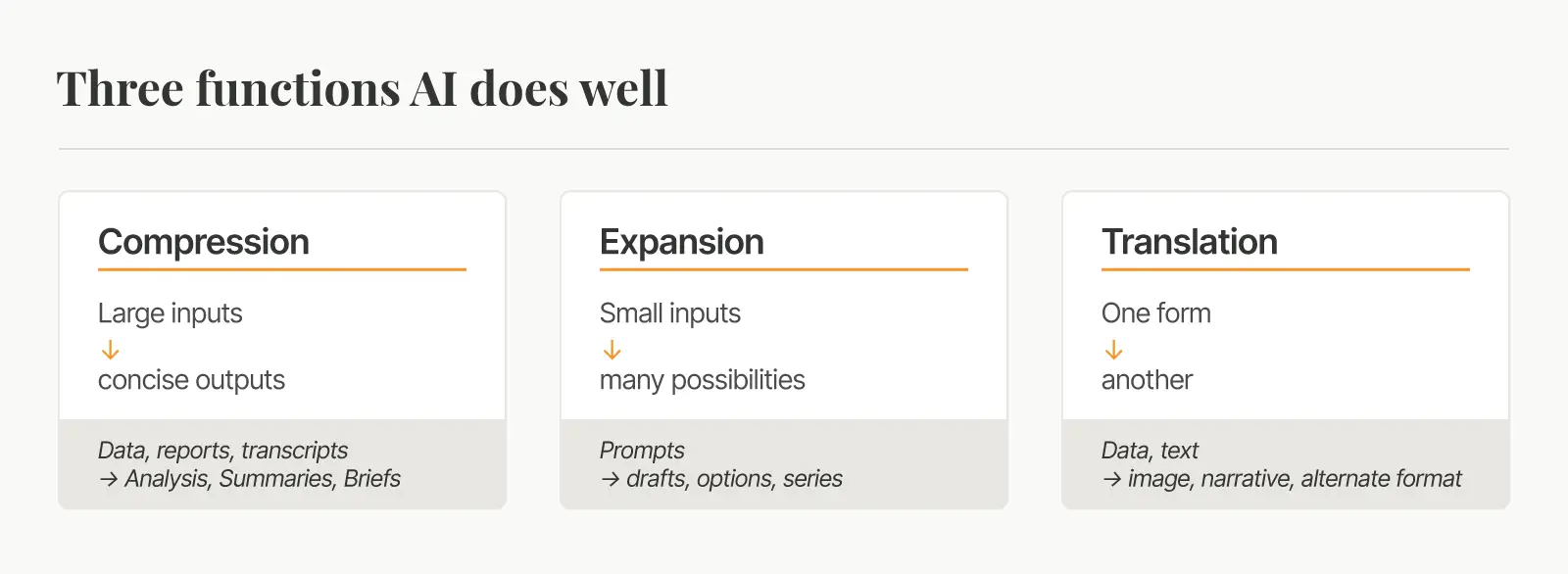

When you look across the full range of daily AI tasks, they collapse into three distinct functions.

Compression

Large inputs become concise outputs. A hundred-page document becomes five key points. A meeting transcript becomes clear action items.

Expansion

Small inputs become many possibilities. A single prompt becomes ten variations. A topic becomes a full agenda. A question becomes a structured framework.

Translation

One form becomes another. Data becomes narrative. Bullet points become prose. A technical explanation becomes an executive summary.

These capabilities are real.

They are valuable.

And they are being used, right now, in some form across your organization.

But there is a critical factor missing in each of them that can only come from human experience.

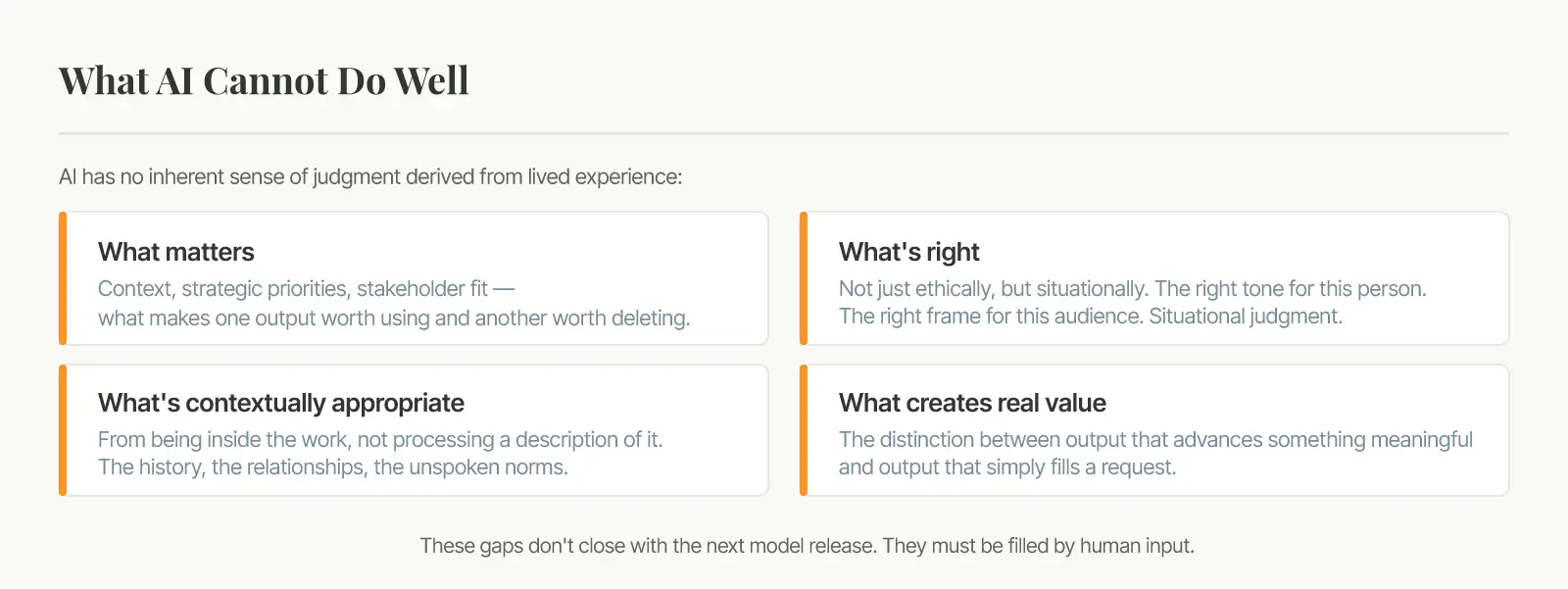

The Judgment and Discernment Gap

No matter what dataset AI has been trained on, it hasn’t lived a life.

It hasn’t read the room.

It hasn’t managed outcomes.

It hasn’t felt the joy of success and the pain of making a difficult decision.

It doesn’t know the difference between what is correct and what is right.

What matters

The organizational context, strategic priorities, and stakeholder relationships that determine whether an output is actually worth using.

What’s right

Not just ethically, but situationally. The right tone for this person. The right frame for this audience. The right move for this moment.

What’s contextually appropriate

The judgment that comes from being inside the work, not from processing a description of it. The history, the relationships, the unspoken norms.

What creates real value

The distinction between an output that advances something meaningful and one that simply fulfills a request.

These aren’t gaps that the next AI model release will close.

They are the nature of human judgment and discernment.

When that judgment is absent, AI doesn’t fail. It produces. Fluently. Confidently. At volume.

And that’s when the downstream problem begins.

Small Offloads. Big Consequences.

The issue begins when we passively use AI without inserting human judgment into the equation.

When we move too quickly to consider downstream impacts.

When we offload too much of a process, or the wrong parts of it, to AI.

These small offloads aggregate into patterns.

And those patterns begin to quietly reshape how your organization thinks, communicates, and decides.

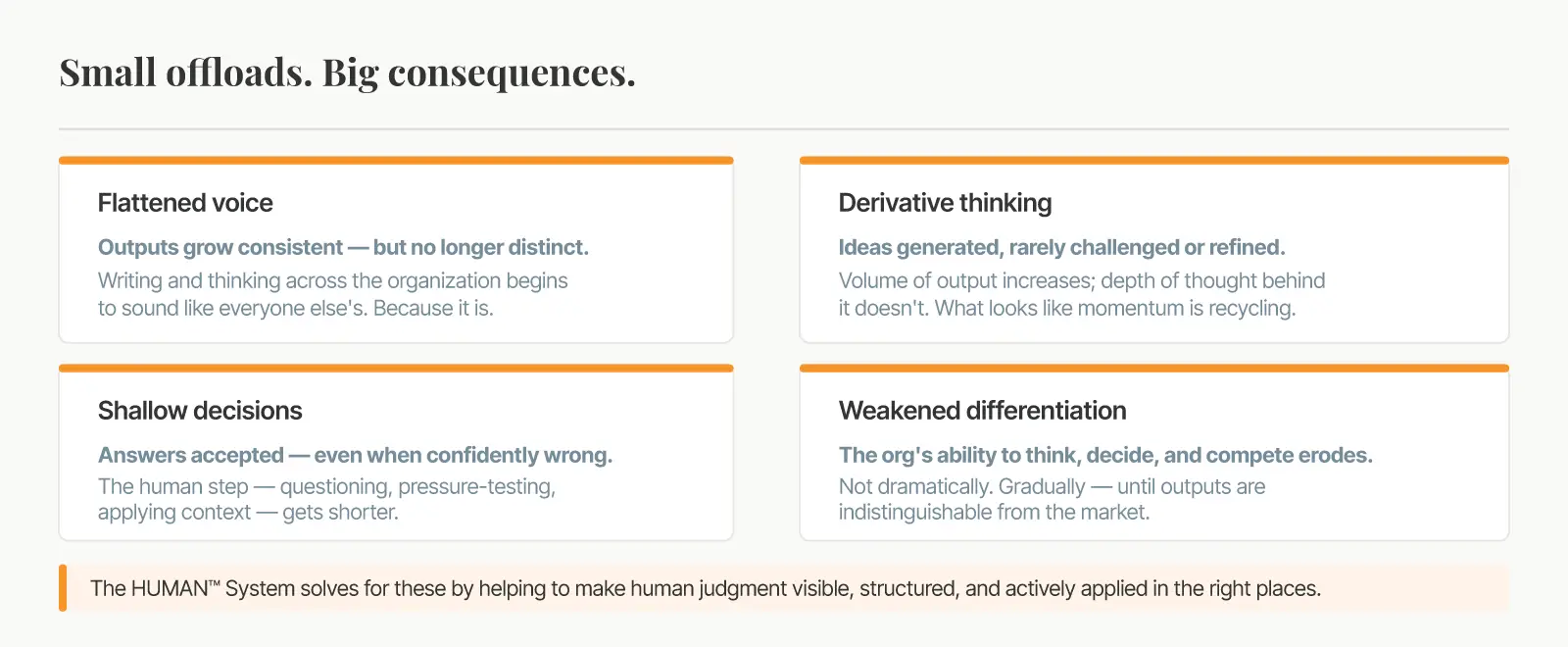

Flattened voice.

Outputs grow consistent, but no longer distinct. Writing, thinking, and communication across the organization begin to sound like everyone else’s. Because increasingly, they are.

Derivative thinking.

Ideas are generated quickly and rarely interrogated. The volume of output increases; the depth of thought behind it doesn’t. What looks like productivity is often recycling.

Shallow decisions.

When AI provides answers with confidence, the human step — questioning, pressure-testing, applying context — gets shorter. Decisions are made faster and challenged less often.

Weakened differentiation.

Over time, the organization’s ability to think originally, decide confidently, and compete on perspective erodes. Not dramatically. Gradually. Until someone notices the outputs are indistinguishable from each other, and from the market.

This is not a technology failure. The technology is simply amplifying the wrong things, at scale.

Getting the Human-AI Balance Right

The answer isn’t less AI.

It’s clarity on where human judgment is not just preferable but the source of the value itself.

The HUMAN™ System is built on a straightforward premise:

If judgment is what AI can’t carry, then we must make human judgment visible, structured, and actively applied in the right places.

That means helping individuals understand:

- How they think, decide, and process information.

- Where their judgment creates genuine differentiation.

- Where AI can support them, and where they need to own decisions.

And helping organizations understand:

- How their people actually create value in work that is increasingly shaped by AI.

- Where human input is a critical variable.

- How to design AI use that strengthens ROI while protecting brand equity.

The HUMAN™ System does that.

Not as a concept. As a practice.

What This Means for You

The question is not whether to use AI.

That decision has already been made.

The question is what you put beside it.

The organizations that get the most from AI won’t be the ones that adopted it fastest.

They’ll be the ones that understood how their people create value — and built that understanding into how AI is used.

AI × H = [ROI]ⁿ

We can help you solve for H — the human element in the ROI equation.

Schedule a conversation to discuss options for introducing the HUMAN™ System to your team.